5/17/2023

Decision tree entropy

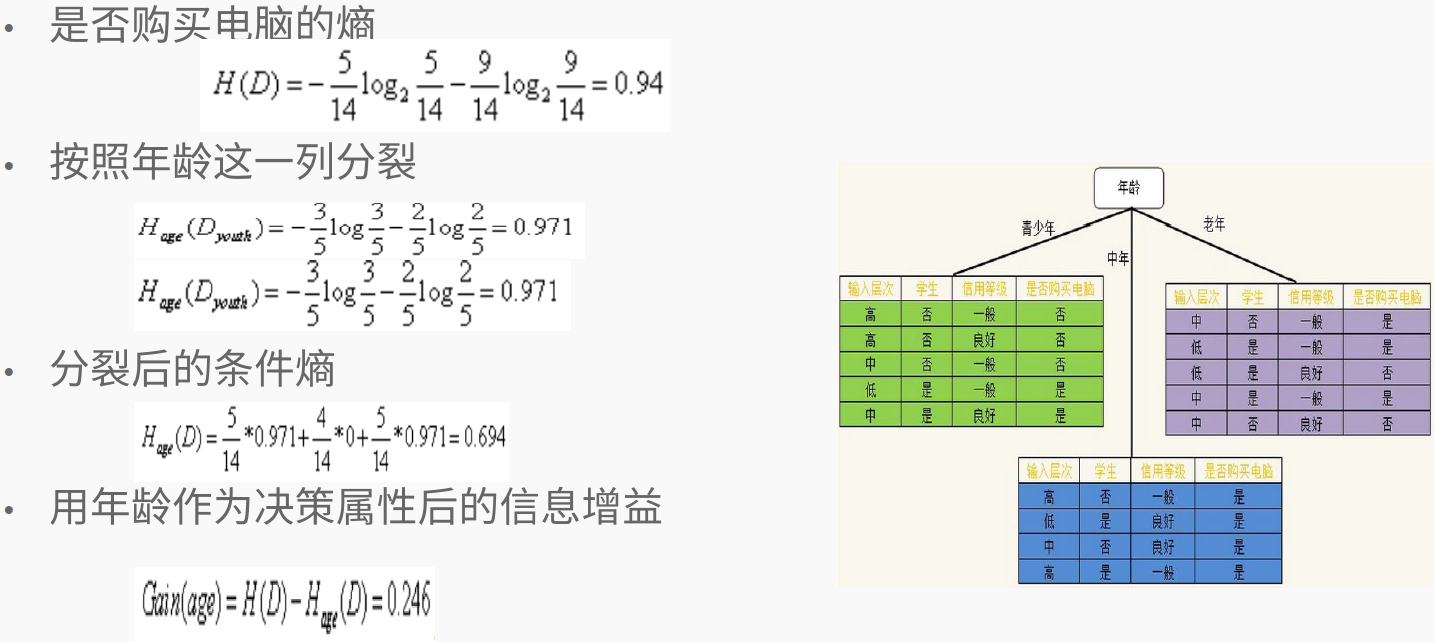

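A decision tree splits the data into subsets on the values of its features, and it chooses the splits that produce the purest subsets, measuring purity by calculating entropy. Entropy is calculated at every node so that the tree can pick the split that reduces it the most. The information gain of an attribute is the entropy of the parent set minus the entropy of each possible outcome of the attribute, weighted by the probability of that outcome happening. For attribute Y, whose two outcomes each have probability 5/10 and whose parent set (Result) has entropy 1, this gives

$$\mathrm{IG}(Y) = 1 - \tfrac{5}{10}\,H(\text{Result} \mid Y = y_1) - \tfrac{5}{10}\,H(\text{Result} \mid Y = y_2) = 0.029$$

Decision tree algorithms are classification methods that predict an outcome by applying a sequence of tests on the input features.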
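The information-gain calculation for attribute Y can be sketched in Python. This is a minimal sketch, not the post's own code: `entropy` and `information_gain` are hypothetical helpers, and the labels are illustrative assumptions chosen so that Result splits 5/5 and each branch of Y has a 3:2 mix of classes. With those assumed branches each child has entropy of about 0.971, so the gain comes out at 0.029.

```python
from collections import Counter
from math import log2


def entropy(labels):
    """Shannon entropy (base 2) of a sequence of class labels."""
    n = len(labels)
    if n == 0:
        return 0.0
    counts = Counter(labels)
    # max() turns the -0.0 produced by a pure set into a plain 0.0
    return max(0.0, -sum((c / n) * log2(c / n) for c in counts.values()))


def information_gain(parent_labels, child_groups):
    """Parent entropy minus the size-weighted entropy of each child group."""
    n = len(parent_labels)
    weighted = sum(len(g) / n * entropy(g) for g in child_groups)
    return entropy(parent_labels) - weighted


# Assumed data: Result is split 5/5, and each outcome of Y holds 5 rows
# with a 3:2 mix of Result values (hypothetical, for illustration only).
result = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
branch_y1 = [1, 1, 1, 0, 0]  # rows where Y = y1 (assumed)
branch_y2 = [1, 1, 0, 0, 0]  # rows where Y = y2 (assumed)

print(entropy(result))                                              # 1.0
print(round(information_gain(result, [branch_y1, branch_y2]), 3))   # 0.029
```

The functions take plain label lists, so the same code works for any number of classes.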

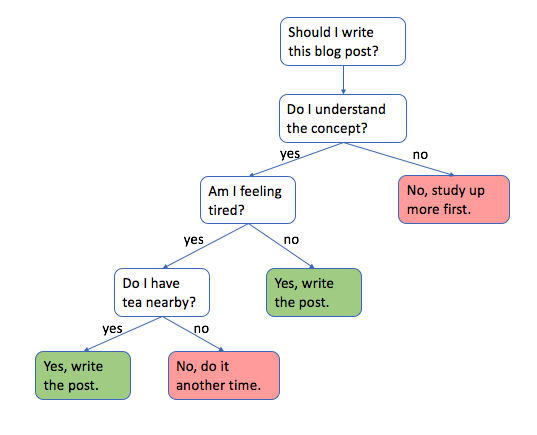

A decision tree is a flowchart-like structure in which each internal node represents a test on a feature, each branch represents an outcome of that test, and each leaf node holds a class label. In machine learning, entropy measures the disorder, or impurity, of the information being processed; for a decision tree that is the mix of class labels at a node, and it determines how the tree chooses to split the data. According to the well-known Shannon entropy formula, the entropy of a set $S$ in which class $i$ occurs with proportion $p_i$ is

$$H(S) = -\sum_i p_i \log_2 p_i$$

I am finding it difficult to calculate entropy and information gain for ID3 when there are multiple possible classes and the parent has a lower entropy than the child. Let me use this as an example, a table with the columns Attr Y, Attr X and Result.

Now here, I believe the first step is to calculate the entropy of the original dataset, in this case Result. That is the probability-weighted sum of the negative logs of Result being +1 and 0, i.e. $-\tfrac{5}{10}\log_2\tfrac{5}{10} - \tfrac{5}{10}\log_2\tfrac{5}{10} = 1$.

I've been asked to code my own decision tree classifier class, and I need to visualize the tree after fitting training data on it, but when I run `plot_tree` from the `sklearn.tree` package, it gives me an error. The relevant fragment of my code is `best_rule` followed by `def information_gain(self, parent_node, feature, rule):`, which computes `parent_entropy = self.entropy(parent_node, parent_node, rule)` and then `left_idxs`.

Decision trees have drawbacks too. There is a high probability of overfitting, and a single tree often gives lower prediction accuracy on a dataset than other machine learning algorithms. The scikit-learn documentation for its tree estimators (including `DecisionTreeRegressor`, a decision tree regressor) notes that the default values for the parameters controlling the size of the trees (e.g. `max_depth`, `min_samples_leaf`, etc.) lead to fully grown and unpruned trees, which can potentially be very large on some data sets.

4 Programming exercise: Applying decision trees and k-nearest neighbors (50 pts)

Introduction: This data was extracted from the 1994 Census bureau database by Ronny Kohavi and Barry Becker. For computational reasons, we have already extracted a relatively clean subset of the data for this HW.
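The `information_gain` fragment in the question can be filled out into a runnable method roughly as follows. This sketch is hypothetical: the class name `MyDecisionTree`, storing `X` and `y` on the instance, treating `parent_node` as a list of sample indices, and reading `rule` as a numeric threshold (values `<=` it go to `left_idxs`) are all assumptions, not the original class. Here `entropy` takes only an index list, unlike the three-argument call in the fragment. Nothing depends on the number of classes, so it also covers the multi-class ID3 case.

```python
from collections import Counter
from math import log2


class MyDecisionTree:
    """Hypothetical skeleton of a hand-written tree; only split scoring is shown."""

    def __init__(self, X, y):
        self.X = X  # list of feature rows
        self.y = y  # list of class labels (any number of classes)

    def entropy(self, idxs):
        """Base-2 Shannon entropy of the labels at the given sample indices."""
        n = len(idxs)
        if n == 0:
            return 0.0
        counts = Counter(self.y[i] for i in idxs)
        # max() turns the -0.0 of a pure node into a plain 0.0
        return max(0.0, -sum((c / n) * log2(c / n) for c in counts.values()))

    def information_gain(self, parent_node, feature, rule):
        """Gain from sending rows with X[i][feature] <= rule left, the rest right.

        parent_node is assumed to be the list of sample indices at the node.
        """
        parent_entropy = self.entropy(parent_node)
        left_idxs = [i for i in parent_node if self.X[i][feature] <= rule]
        right_idxs = [i for i in parent_node if self.X[i][feature] > rule]
        if not left_idxs or not right_idxs:
            return 0.0  # a split that leaves one side empty gains nothing
        n = len(parent_node)
        weighted_child_entropy = (
            len(left_idxs) / n * self.entropy(left_idxs)
            + len(right_idxs) / n * self.entropy(right_idxs)
        )
        return parent_entropy - weighted_child_entropy


X = [[v] for v in range(10)]  # one numeric feature: 0..9
y = [0] * 8 + [1, 2]          # three classes, skewed toward class 0
tree = MyDecisionTree(X, y)
root = list(range(10))        # all sample indices sit at the root node

print(round(tree.entropy(root), 3))                  # 0.922
print(round(tree.entropy([7, 8, 9]), 3))             # 1.585, the right child
print(round(tree.information_gain(root, 0, 6), 3))   # 0.446
```

In this usage the right child (entropy 1.585) is more mixed than the parent (0.922), yet the gain is still positive. That holds in general: the size-weighted average of the child entropies can never exceed the parent entropy, so information gain is never negative even though an individual child can have higher entropy than its parent.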
